GPUs are specialized chips that do thousands of simple calculations simultaneously, making them perfect for AI training.

- Originally built for gaming graphics but ideal for AI's parallel math

- Like having 1,000 people chopping onions versus one person with a fancy knife

- Essential for modern AI - ChatGPT would take centuries to train on regular chips

- Most people rent GPU time from cloud providers rather than buying

GPUs are why AI went from research labs to your daily workflow.

What is a GPU?

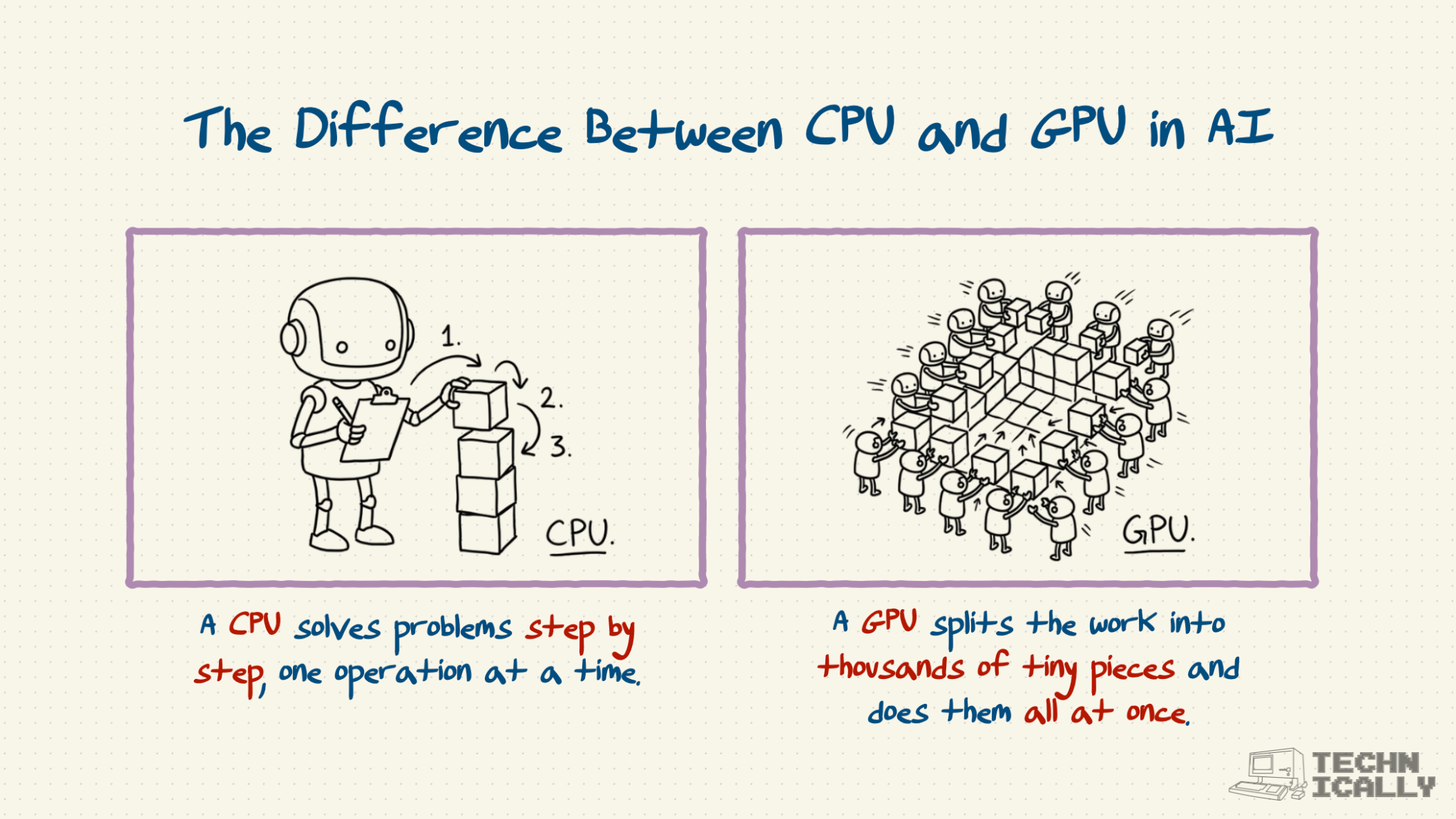

A GPU (Graphics Processing Unit) is a specialized computer chip designed for parallel processing - doing thousands of simple calculations at the same time. While your laptop's CPU is great at complex tasks done in sequence, GPUs excel at simple tasks done massively in parallel.

Originally built for rendering video game graphics (displaying millions of pixels simultaneously), GPUs turned out to be perfect for AI training, which requires millions of simple mathematical operations.

Why do AI models need GPUs?

It turns out that another computing task that benefits from parallel processing is training and running AI models. For all of their complications, today's GenAI models are basically just a lot of simple math.

You can picture all that math as one giant equation. The mechanics of this "equation" are what's called a neural network - tons and tons of neurons, each firing to transmit signals and data. Today's neural nets have billions, if not trillions, of these little neurons.

So what is a neuron, exactly? You can think of it as a tiny piece of the equation. Each one is very simple: all it does is take a number, perform some mathematical calculation, and then spit out the answer.
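To make that concrete, here is a minimal sketch of a single neuron in Python. The weights, bias, and ReLU activation are illustrative assumptions rather than values from any particular model, and the neuron takes a short list of inputs because real neurons usually combine several numbers rather than one.

```python
def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through ReLU."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, total)  # ReLU: negative results become 0

# Hypothetical values, chosen only for illustration.
output = neuron(inputs=[1.0, 2.0, 3.0], weights=[0.5, -1.0, 2.0], bias=0.5)
print(output)  # 5.0
```

Nothing here is harder than multiplication and addition. The difficulty comes from having to do it billions of times.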

The massive neural network equation is a perfect candidate for parallel computing. It's made up of billions of individual components that are themselves incredibly simple, like slicing an onion. If you could do more than one of these at a time, things would get a lot faster.

What's the difference between CPU and GPU for AI?

This onion-mandoline effect, as I've coined it, is called parallel computing. Instead of having one worker do the whole task, you break it into parts and have multiple workers tackle them at once. This is what GPUs excel at: doing pretty simple things, but at massive scale and speed.

- CPU: One very smart person doing complex tasks in order

- GPU: 1,000 people doing simple tasks simultaneously

For AI training, you need the GPU approach.
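The split-the-work idea can be sketched in plain Python. This is an illustration of how a job gets divided, not a GPU benchmark: it uses a handful of CPU threads from the standard library's `concurrent.futures`, and the chunk size and worker count are arbitrary assumptions.

```python
from concurrent.futures import ThreadPoolExecutor

def square_all(chunk):
    """The 'simple task': square every number in one chunk."""
    return [n * n for n in chunk]

numbers = list(range(1_000))

# CPU-style: one worker walks through the whole list in order.
sequential = square_all(numbers)

# GPU-style: cut the list into chunks and hand each to a separate worker.
chunk_size = 250  # arbitrary, for illustration
chunks = [numbers[i:i + chunk_size] for i in range(0, len(numbers), chunk_size)]
with ThreadPoolExecutor(max_workers=4) as pool:
    results = pool.map(square_all, chunks)  # results come back in chunk order
    parallel = [value for chunk in results for value in chunk]

print(sequential == parallel)  # True
```

Python threads will not actually make this particular job faster. The point is that no chunk depends on any other, which is exactly the property that lets a GPU spread the work across thousands of cores.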

Can you train AI without GPUs?

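Technically yes, but practically no for serious AI work. By one widely cited estimate, training GPT-3 on a single GPU would take about 355 years, and a CPU would be slower still. Split across thousands of GPUs running in parallel, the same job finishes in weeks.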
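As a rough back-of-the-envelope check, the sketch below takes the 355-year figure as the time for one worker and divides it across more workers. It assumes perfect linear scaling, which real clusters do not achieve, and the worker counts are hypothetical.

```python
SERIAL_YEARS = 355   # figure quoted above
DAYS_PER_YEAR = 365

def ideal_days(workers: int) -> float:
    """Days needed if the work splits perfectly across `workers`."""
    return SERIAL_YEARS * DAYS_PER_YEAR / workers

for workers in (1, 1_000, 10_000):  # hypothetical worker counts
    print(f"{workers:>6,} workers: {ideal_days(workers):,.1f} days")
```

Even under these idealized assumptions, it takes thousands of workers to bring centuries down to weeks or months.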

Most AI education and development now assumes GPU access, even if rented from cloud providers.

How much does GPU compute cost?

Most people rent rather than buy:

- Cloud rental: $0.50 to $8 per hour, depending on GPU type

- Purchase costs: $10,000 to $40,000 for professional AI GPUs

- Training costs: $10 to $100 for a small chatbot, $1 million to $10 million for a large model

For most businesses, cloud rental makes more sense unless you're doing AI work continuously.
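A quick way to sanity-check the rent-versus-buy decision is to compute the break-even point. The sketch below uses one purchase price and one hourly rate picked from inside the ranges above purely for illustration; it ignores power, cooling, staff time, and resale value, all of which shift the real answer.

```python
def break_even_hours(purchase_price: float, hourly_rate: float) -> float:
    """Hours of rental that cost as much as buying the GPU outright."""
    return purchase_price / hourly_rate

# Illustrative picks from inside the ranges quoted above.
purchase_price = 24_000  # USD, professional GPU
hourly_rate = 4.00       # USD per hour, cloud rental

hours = break_even_hours(purchase_price, hourly_rate)
days = hours / 24
print(f"Break-even after {hours:,.0f} hours (~{days:.0f} days of nonstop use)")
# Break-even after 6,000 hours (~250 days of nonstop use)
```

With these numbers, buying only pays off if the GPU stays busy around the clock for most of a year.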

What are the best GPUs for AI?

- Professional: NVIDIA H100 (current top), A100 (previous gen), V100 (older but capable)

- Consumer: RTX 4090 (best for hobbyists), RTX 4080/4070 (good for learning)

NVIDIA dominates because of its CUDA software ecosystem, not just hardware performance.

Why are GPUs so expensive and hard to get?

One reason it's hard to get a GPU is that it's hard to make a GPU.

Chips are notoriously difficult to manufacture. They require an incredible degree of specialized expertise, lots and lots of capital, and a factory that's going to make them for you, of which there are very few out there.

And then there's the fact that most of NVIDIA's limited supply doesn't go to consumers: it goes to data centers. Data center customers (think: AWS, GCP, etc.) account for roughly 90% of NVIDIA's revenue, so if you want a GPU for AI, you will most likely end up renting one from a cloud provider.

Frequently Asked Questions

Do I need to understand GPU programming to use AI?

Nope. Modern AI frameworks like PyTorch and TensorFlow handle the GPU programming for you. You just tell them to use the GPU instead of the CPU.
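In PyTorch, for example, that choice is typically a single `device` setting. The sketch below uses PyTorch's `torch.cuda.is_available()` check and falls back to the CPU when no NVIDIA GPU is found. The matrix sizes are arbitrary, and the `try`/`except` guard is only there so the snippet still runs on a machine without PyTorch installed.

```python
try:
    import torch
except ImportError:  # PyTorch not installed; the GPU part is skipped
    torch = None

device = "cpu"
if torch is not None:
    # Use the GPU when one is visible, otherwise stay on the CPU.
    device = "cuda" if torch.cuda.is_available() else "cpu"

    # Create two matrices directly on the chosen device and multiply them.
    a = torch.randn(512, 512, device=device)
    b = torch.randn(512, 512, device=device)
    c = a @ b
    print(device, tuple(c.shape))
```

The same code runs on either device. Only the `device` string changes, and the framework takes care of the low-level GPU work.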

What's CUDA and why does it matter?

CUDA is NVIDIA's parallel computing platform and programming model, the software layer that lets programs run general-purpose work on NVIDIA GPUs efficiently. Most AI tools are built on CUDA, which is why NVIDIA dominates AI computing despite having hardware competitors.

Are there alternatives to NVIDIA GPUs?

AMD makes GPUs but with limited AI software support. Google (TPUs), AWS (Inferentia), and startups like Groq are building AI-specific chips that can be faster than GPUs, but they're not widely available.

Can I use gaming GPUs for AI?

Yes, but with limitations. Gaming GPUs work for learning and small projects but lack the memory and specialized features needed for large-scale AI work.
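The memory limit is easy to estimate. The sketch below counts only the memory needed to hold a model's weights, assuming 16-bit weights and a 24 GB gaming card (the amount on an RTX 4090). The model sizes are hypothetical round numbers.

```python
BYTES_PER_PARAM = 2   # assumes 16-bit weights
GAMING_GPU_GB = 24    # assumes an RTX 4090-class card with 24 GB

def weights_gb(params_billions: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * BYTES_PER_PARAM / 1e9

for size in (7, 70):  # hypothetical model sizes, in billions of parameters
    needed = weights_gb(size)
    verdict = "fits" if needed <= GAMING_GPU_GB else "does not fit"
    print(f"{size}B parameters: ~{needed:.0f} GB of weights, {verdict} in {GAMING_GPU_GB} GB")
```

Training needs several times more memory than this, because gradients, optimizer state, and intermediate activations all have to be stored alongside the weights.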

What's the future of GPUs for AI?

More competition from specialized AI chips, but GPUs will likely remain dominant due to their flexibility and software ecosystem. Expect continued shortages as AI adoption grows.