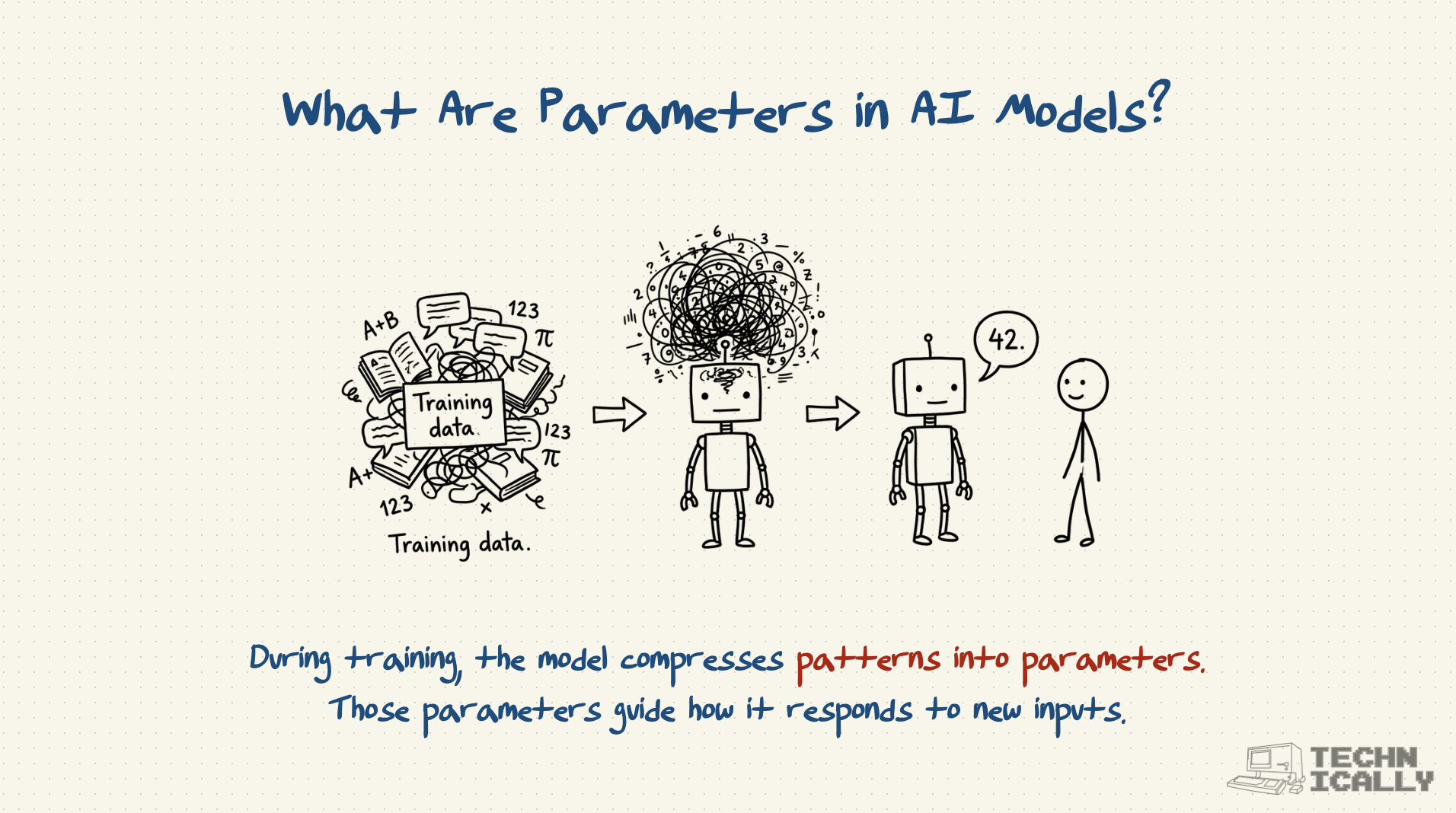

Parameters are the learned "knowledge" stored inside AI models - the numerical values that determine how the model responds to inputs.

- Think of them as all the facts, patterns, and associations the model learned during training

- More parameters generally mean more sophisticated capabilities but require more computing power

- Modern AI models have billions to trillions of parameters

- Like the difference between someone who's read 100 books versus 100,000 books

Parameters are why bigger AI models tend to be smarter, but also why they're more expensive to run.

What are parameters in AI models?

Parameters are the numerical values that an AI model learns during training - essentially the "knowledge" stored in the model's neural network. When you ask ChatGPT a question, it uses billions of these parameters to determine what response to generate.

A neural network is built from small units called neurons. You can think of each neuron as a tiny piece of one very large equation. Each one is very simple: all it does is take in some numbers, perform a mathematical calculation, and spit out the answer. When you aggregate this across a huge number of neurons, you have an AI model – the equation.

The parameters are the specific numbers that determine what calculation each neuron performs. During training, the model adjusts these parameters millions of times to get better at its task.
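As a rough illustration of how parameters control a neuron's calculation, here is a minimal sketch of a single neuron. It assumes the common "weighted sum plus bias, followed by ReLU" form, and the specific weights and bias are made-up values rather than anything from a real model.

```python
def neuron(inputs, weights, bias):
    """One neuron: weighted sum of the inputs plus a bias, passed through ReLU."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, total)  # ReLU: negative results are clipped to 0


# Hypothetical parameter values: three weights and one bias = four parameters.
weights = [0.5, -1.0, 2.0]
bias = 0.25

output = neuron([1.0, 2.0, 3.0], weights, bias)
print(output)  # 0.5 - 2.0 + 6.0 + 0.25 = 4.75
```

Changing any of the four numbers changes what the neuron computes, which is all that "learning" adjusts during training.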

How many parameters do AI models have?

Today's largest neural nets have billions, if not trillions, of parameters making up their equation.

Here's the scale progression:

- Early models (2010s): Millions of parameters

- GPT-1 (2018): 117 million parameters

- GPT-2 (2019): 1.5 billion parameters

- GPT-3 (2020): 175 billion parameters

- GPT-4 (2023): No official count; unofficial estimates put it at 1+ trillion parameters

- GPT-5 (2025): No official count; unconfirmed estimates suggest ~1.7–1.8 trillion parameters

For comparison, the human brain has roughly 100 trillion synapses - a loose biological analogue of parameters.

Why do more parameters make models better?

The math performed by individual neurons is actually pretty simple – it's usually just basic multiplication and addition that you could do with a calculator. So how are AI models able to capture such complex patterns, like the ones involved in language and vision? The trick is to string together a lot of neurons, so that the model as a whole ends up with billions of parameters.
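To see how quickly the parameter count grows when neurons are strung together, here is a small sketch that counts parameters in a stack of fully connected layers. It assumes every neuron has one weight per input plus one bias; the layer sizes are hypothetical examples, and real language models use more elaborate layer types.

```python
def dense_layer_params(n_inputs, n_neurons):
    """Each neuron has one weight per input, plus one bias."""
    return n_inputs * n_neurons + n_neurons


def mlp_params(layer_sizes):
    """Total parameters for fully connected layers of the given sizes."""
    pairs = zip(layer_sizes, layer_sizes[1:])
    return sum(dense_layer_params(n_in, n_out) for n_in, n_out in pairs)


# Hypothetical networks: 784 inputs, 10 outputs, different hidden layers.
small = mlp_params([784, 128, 10])
wide = mlp_params([784, 1024, 1024, 10])

print(small)  # 101770
print(wide)   # 1863690
```

Widening and deepening the hidden layers takes this toy network from about 100 thousand to about 1.9 million parameters, even though each neuron still only multiplies and adds.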

More parameters allow models to:

- Store more knowledge: Like the difference between a 100-page book and a 1,000-page encyclopedia

- Learn finer distinctions: Recognize subtle differences in writing styles, image details, or logical patterns

- Handle more complex tasks: Connect disparate concepts and perform multi-step reasoning

- Generalize better: Apply learned patterns to new situations they haven't seen before

But there are diminishing returns - doubling parameters doesn't double performance.

What's the relationship between parameters and model size?

More parameters = larger model = more computational requirements:

- Storage: GPT-3's 175B parameters require about 350GB of storage at 2 bytes per parameter (16-bit precision)

- Memory: Running large models requires high-end GPUs with enough memory (VRAM) to hold the parameters

- Processing: More parameters mean more calculations per response

- Training cost: Larger models are far more expensive to train, since they need more computation per example and more training data

This is why most people use cloud APIs rather than running large models locally.
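The storage figure above is simple arithmetic: parameter count times bytes per parameter. Here is a minimal sketch, assuming weights are stored as 16-bit or 32-bit floats and counting only the weights themselves (not the extra memory needed while the model runs). The function and dictionary names are illustrative.

```python
# Bytes used by one parameter at each numeric precision.
BYTES_PER_PARAM = {"fp16": 2, "fp32": 4}


def weights_size_gb(num_params, precision="fp16"):
    """Approximate size of the stored weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


gpt3_fp16 = weights_size_gb(175_000_000_000, "fp16")
small_fp16 = weights_size_gb(7_000_000_000, "fp16")
small_fp32 = weights_size_gb(7_000_000_000, "fp32")

print(gpt3_fp16)   # 350.0
print(small_fp16)  # 14.0
print(small_fp32)  # 28.0
```

A 175B-parameter model at 16-bit precision comes out to 350GB, which is far more than a single consumer GPU can hold.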

How do parameters affect AI performance?

Generally, more parameters lead to better performance, but with important caveats:

- Quality vs. quantity: Well-trained smaller models can outperform poorly trained larger ones

- Task dependency: Some tasks benefit more from scale than others

- Data requirements: Larger models need more training data to reach their potential

- Efficiency trade-offs: Smaller models are faster and cheaper to run

The "right" number of parameters depends on your specific use case and computational budget.

Do more parameters always mean better performance?

Not always. Model performance depends on several factors:

- Training quality: How well the parameters were optimized during training

- Data quality: Better training data leads to better parameter values

- Architecture: How the parameters are organized and connected

- Task fit: Some tasks need broad knowledge (more parameters), others need speed (fewer parameters)

A well-trained 7-billion-parameter model might outperform a poorly trained 70-billion-parameter model on specific tasks.

How are parameters trained?

During training, the model:

- Makes a prediction using current parameter values

- Compares the result to the correct answer

- Adjusts parameters slightly to improve future predictions

- Repeats millions of times until performance stops improving

This process is like tuning a massive instrument with billions of knobs - each adjustment is tiny, but collectively they create sophisticated behavior.
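The predict, compare, adjust, repeat loop can be sketched with a toy model that has just two parameters. This example assumes plain gradient descent on mean squared error, fitting a straight line to made-up data; real models use the same idea with billions of parameters and more sophisticated optimizers.

```python
def train(data, learning_rate=0.05, steps=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0  # the two parameters, starting from arbitrary values
    n = len(data)
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, y in data:
            prediction = w * x + b        # 1. make a prediction
            error = prediction - y        # 2. compare with the correct answer
            grad_w += 2 * error * x / n   # how the loss changes as w changes
            grad_b += 2 * error / n       # how the loss changes as b changes
        w -= learning_rate * grad_w       # 3. adjust the parameters slightly
        b -= learning_rate * grad_b
    return w, b                           # 4. repeated for `steps` rounds


# Made-up data generated from y = 3x + 1.
data = [(x, 3.0 * x + 1.0) for x in [0.0, 1.0, 2.0, 3.0, 4.0]]
w, b = train(data)
print(round(w, 3), round(b, 3))  # 3.0 1.0
```

Here `w` and `b` are learned, while `learning_rate` and `steps` are chosen by hand before training starts.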

Frequently Asked Questions About Parameters

What's the difference between parameters and hyperparameters?

- Parameters: Learned during training (the model's "knowledge")

- Hyperparameters: Set before training (learning rate, model size, training duration)

Think of parameters as what the student learns, and hyperparameters as how you teach them.

How much memory do parameters require?

Roughly 2-4 bytes per parameter for storage, depending on numeric precision (16-bit or 32-bit), plus additional memory for processing. A 7B parameter model needs about 14-28GB just to load, before doing any calculations.

Can you modify parameters after training?

Yes, through techniques like fine-tuning (adjusting parameters for new tasks) or pruning (removing less important parameters). But major changes usually require retraining.
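As one illustration of pruning, here is a minimal sketch of magnitude pruning, which assumes that weights closest to zero are the least important and sets them to zero. This is only one of several ways to judge importance, and the weight values below are made up.

```python
def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest absolute value."""
    n_prune = int(len(weights) * fraction)
    # Indices ordered from smallest to largest magnitude.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


# Hypothetical weights from a tiny layer.
weights = [0.8, -0.05, 0.3, 0.01, -1.2, 0.1]
pruned = prune_by_magnitude(weights, 0.5)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -1.2, 0.0]
```

In practice a pruned model is usually fine-tuned afterwards to recover any accuracy lost from the removed weights.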

Why don't models just keep getting bigger?

Diminishing returns and practical limits. Training costs climb steeply as models grow, and beyond a certain point, better data and training methods matter more than raw parameter count.

What determines how many parameters a model needs?

The complexity of the task, available training data, and computational budget. Simple tasks might need millions of parameters, while general intelligence might require trillions.