A token is the basic unit of a Large Language Model's vocabulary.

- It's how AI models break down text into digestible pieces for processing

- Tokens aren't quite the same as words—one word might be multiple tokens

- Token limits (or windows) determine how much text an AI can work with at once

- Most AI pricing is based on token usage, so understanding them affects your costs

Think of tokens as the individual LEGO blocks that AI models use to build language—smaller pieces that can be combined to create complex structures.

What is a token?

A token is the basic unit of a Large Language Model's vocabulary.

Here's why tokens exist: AI models can't work directly with letters and words the way humans do. They need everything converted into numbers first…after all, they’re just machines under the hood. Tokens are the bridge between human language and the mathematical representations that AI models actually understand.

Armed with a more ergonomic representation of words and text, neural networks can learn all sorts of important stuff about text, like:

- Semantic relationships between words

- Context from surrounding text (the words and sentences before and after a given word)

- Figuring out which words are important and which aren’t

Understanding tokens matters because they enable the mathematical transformations that power modern AI language processing.

How do AI models use tokens?

AI models use tokens as the fundamental building blocks for all language processing:

Input Processing

When you send a message to an AI, the first thing it does is convert your text into tokens. Each token is then mapped to a numerical vector that captures its meaning and its relationships to other tokens.
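
To make the text-to-numbers step concrete, here is a minimal sketch of the idea. The tiny vocabulary, the whitespace splitting, and the random 4-dimensional vectors are all assumptions for illustration; a real model uses a learned subword tokenizer and learned vectors with far more dimensions.

```python
import random

# Toy vocabulary (hypothetical): every known token gets an integer ID.
VOCAB = {"<unk>": 0, "the": 1, "cat": 2, "sat": 3, "down": 4}
EMBED_DIM = 4  # illustrative only; real models use far larger vectors

# One vector per vocabulary entry. Random here; a trained model learns these.
rng = random.Random(0)
EMBEDDINGS = [[rng.uniform(-1, 1) for _ in range(EMBED_DIM)] for _ in VOCAB]


def encode(text: str) -> list:
    """Split on whitespace and map each piece to its ID (unknown pieces -> <unk>)."""
    return [VOCAB.get(piece, VOCAB["<unk>"]) for piece in text.lower().split()]


def embed(token_ids: list) -> list:
    """Look up the vector stored for each token ID."""
    return [EMBEDDINGS[token_id] for token_id in token_ids]


ids = encode("The cat sat")
vectors = embed(ids)
print(ids)                            # [1, 2, 3]
print(len(vectors), len(vectors[0]))  # 3 4
```

The key point is the two-step lookup: text becomes IDs, and IDs become vectors the network can do math on.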

Pattern Recognition

The model analyzes patterns in these token sequences based on everything it learned during training. It identifies relationships between tokens and tries to understand context.

Generation

When generating responses, the model predicts the most likely next token based on the context of previous tokens, then repeats this process to build complete responses.
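
The predict-and-repeat loop can be sketched in a few lines. This is a toy, not how any particular model is implemented: the probability table below is made up, and it only looks at the last token, whereas a real model conditions on the whole context and often samples rather than always picking the top choice.

```python
# Hypothetical next-token probabilities, standing in for a trained model.
NEXT_TOKEN_PROBS = {
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "ran": 0.3},
    "sat": {"down": 0.9, "<end>": 0.1},
    "down": {"<end>": 1.0},
}


def generate(prompt: list, max_new_tokens: int = 10) -> list:
    """Greedy decoding: repeatedly append the single most likely next token.

    Assumes a non-empty prompt.
    """
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        candidates = NEXT_TOKEN_PROBS.get(tokens[-1])
        if not candidates:
            break  # the toy table has no prediction for this token
        next_token = max(candidates, key=candidates.get)
        if next_token == "<end>":
            break  # the "model" decided the response is complete
        tokens.append(next_token)
    return tokens


print(generate(["the"]))  # ['the', 'cat', 'sat', 'down']
```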

Context Management

The model keeps track of all the tokens in your conversation to maintain context and coherence across multiple exchanges.

OK, but what are these actual tokens logistically?

I mentioned earlier that one token doesn't always equal one word.

Subword Units

Tokens often represent parts of words rather than complete words. The word "understanding" might become tokens like "understand" + "ing" or even "under" + "stand" + "ing" depending on the model.
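
Here is a rough sketch of how the same word can split differently depending on the vocabulary. It uses greedy longest-match against a made-up vocabulary, which is a simplification chosen for readability and not the exact algorithm any specific model uses.

```python
# Hypothetical subword vocabulary; real vocabularies hold tens of thousands of entries.
VOCAB = {"under", "stand", "understand", "ing", "s"}


def tokenize(word: str, vocab: set) -> list:
    """Greedy longest-match: at each position, take the longest known piece."""
    pieces = []
    start = 0
    while start < len(word):
        for end in range(len(word), start, -1):
            if word[start:end] in vocab:
                pieces.append(word[start:end])
                start = end
                break
        else:
            # No known piece starts here: fall back to a single character.
            pieces.append(word[start])
            start += 1
    return pieces


print(tokenize("understanding", VOCAB))                   # ['understand', 'ing']
print(tokenize("understanding", VOCAB - {"understand"}))  # ['under', 'stand', 'ing']
```

Removing one entry from the vocabulary is enough to change the split, which is why token counts differ between models.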

Consistency Across Languages

The same tokenization approach works for English, Spanish, Chinese, and other languages, allowing models to work multilingually.

Handling Special Cases

Tokens can represent punctuation, spaces, numbers, special characters, and even concepts that don't correspond to traditional words.

Mathematical Efficiency

Each token maps to a specific position in the model's mathematical vocabulary, allowing for consistent numerical processing.

What's the difference between words and tokens?

This is where things get interesting, and sometimes frustrating if you're trying to manage costs or context limits. Exact splits depend on the model's tokenizer, so treat the counts below as illustrative:

Simple Cases:

- "cat" = 1 token

- "dog" = 1 token

- "run" = 1 token

Complex Cases:

- "understanding" = 2 tokens ("understand" + "ing")

- "ChatGPT" = 2 tokens ("Chat" + "GPT")

- "e-mail" = 3 tokens ("e" + "-" + "mail")

- "🚀" = 1 or more tokens, depending on the tokenizer (yes, emojis count!)

Tricky Examples:

- " hello" (with a leading space) = often just 1 token, because many tokenizers fold the leading space into the word

- "123.45" = 3 tokens ("123" + "." + "45")

- "AI/ML" = 3 tokens ("AI" + "/" + "ML")

Language Variations

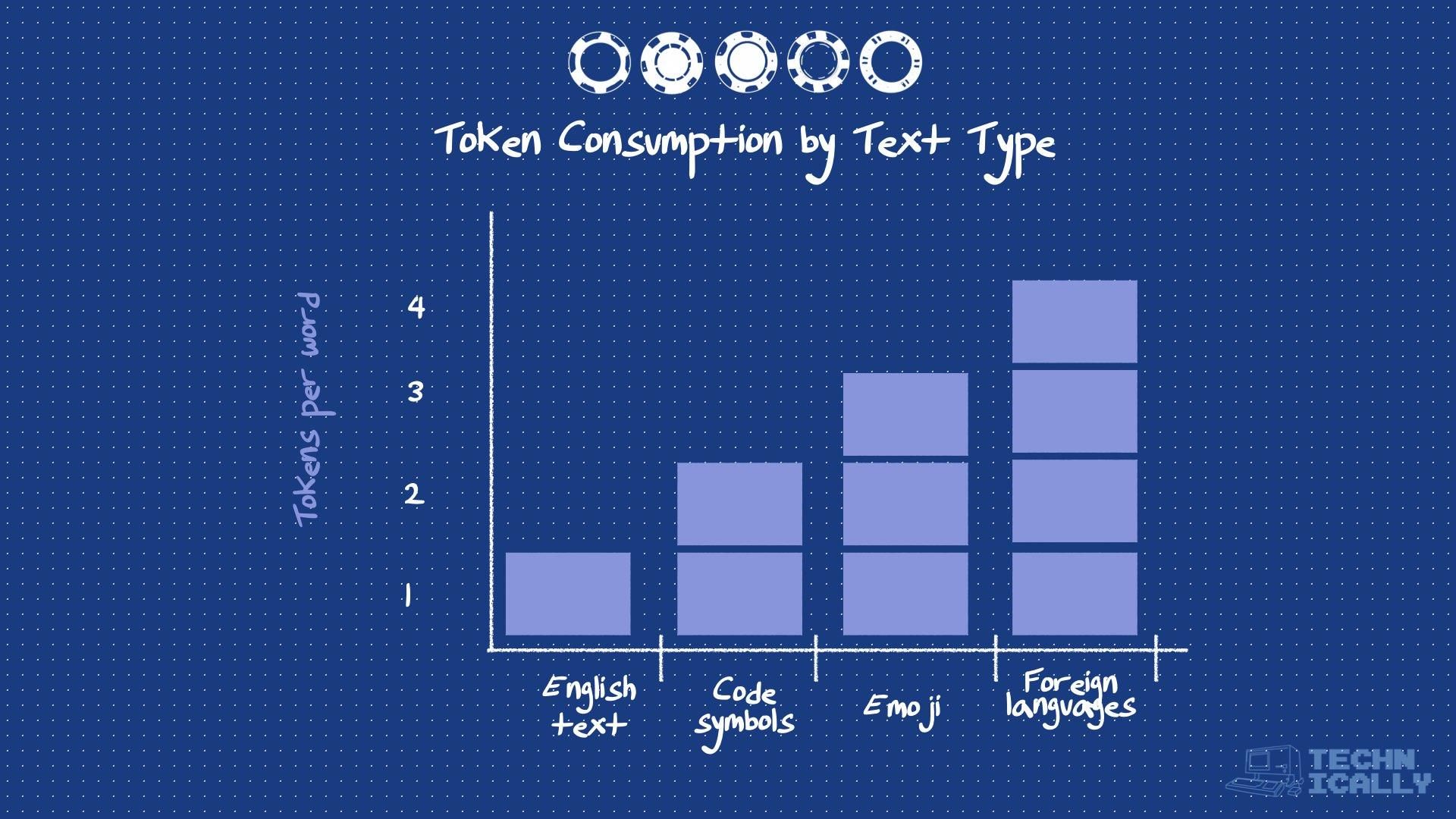

Some languages are more "token-efficient" than others. English typically needs fewer tokens to express the same content than languages with complex writing systems or languages that were less common in the tokenizer's training data.

The general rule of thumb is that common English text averages about 4 characters per token, or roughly 1.3 tokens per word (about three-quarters of a word per token), but this varies dramatically based on content type.

How are tokens counted?

Token counting happens automatically, but understanding the process helps you optimize your AI usage:

Preprocessing

Depending on the tokenizer, text may be cleaned and standardized before tokenization, for example by normalizing unusual Unicode characters or formatting.

Tokenization Algorithm

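Most modern models use algorithms like Byte Pair Encoding (BPE), which build a vocabulary by repeatedly merging the most frequent adjacent character sequences in the training data. Common words end up as single tokens, while rare words get split into subword pieces.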
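
For the curious, the core BPE training loop fits in a short sketch. This is a character-level toy under simplifying assumptions: the four-word corpus and its counts are made up, and production tokenizers typically work on bytes, handle whitespace specially, and learn tens of thousands of merges.

```python
from collections import Counter
from typing import Optional


def most_frequent_pair(words: dict) -> Optional[tuple]:
    """Count adjacent symbol pairs across the corpus, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for left, right in zip(symbols, symbols[1:]):
            pairs[(left, right)] += freq
    # max() returns the first pair seen when counts tie.
    return max(pairs, key=pairs.get) if pairs else None


def merge_pair(words: dict, pair: tuple) -> dict:
    """Replace every occurrence of `pair` with one merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def train_bpe(corpus: dict, num_merges: int) -> list:
    """Learn an ordered list of merges from a {word: count} corpus."""
    words = {tuple(word): freq for word, freq in corpus.items()}
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:
            break
        merges.append(pair)
        words = merge_pair(words, pair)
    return merges


corpus = {"low": 5, "lower": 2, "newest": 6, "widest": 3}  # hypothetical word counts
print(train_bpe(corpus, 3))  # [('e', 's'), ('es', 't'), ('l', 'o')]
```

After two merges the frequent ending "est" has become a single symbol, which is the same effect that turns common words into single tokens at scale.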

Vocabulary Lookup

Each token gets mapped to a specific integer ID in the model's vocabulary. Vocabulary sizes typically range from about 30,000 to over 250,000 entries, depending on whether the model is monolingual or multilingual.

Context Assembly

All the tokens from your conversation get combined into a single sequence that the model processes together.

Tool Availability

Most AI providers offer token counting tools so you can estimate usage before sending requests. OpenAI has a tiktoken library, for example.
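
When you don't have a provider's tokenizer handy, a back-of-the-envelope estimate can still be useful. The sketch below assumes roughly 4 characters per token, a commonly quoted heuristic for English text; it is only an approximation, and a real tokenizer such as tiktoken will give the exact count for a given model.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Crude estimate: character count divided by an assumed average token length."""
    if not text:
        return 0
    return math.ceil(len(text) / chars_per_token)


print(estimate_tokens("Tokens are the basic units of text."))  # 9
```

Expect the estimate to run low for code, emoji-heavy text, and many non-English languages.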

What determines token limits?

Token limits are determined by the AI model's architecture and the computational resources available:

Model Architecture

The "context window" is built into the model's design. GPT-3.5 handles about 4,000 tokens, while GPT-4 handles 8,000 to 128,000 depending on the version. GPT-5 expands this to as many as 400,000 tokens via the API, though ChatGPT users generally get between 32,000 and 128,000 tokens, depending on their subscription tier and the specific model variant (such as the 'Thinking' or 'Fast' mode). Anthropic's Claude models (such as Opus and Sonnet) typically offer a 200,000-token context window, with API and Enterprise versions extending to 500,000 or even 1 million tokens.

Memory Constraints

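Processing longer token sequences requires more memory and computational power. With standard self-attention, the work grows roughly with the square of the sequence length, so doubling the context can roughly quadruple that part of the cost.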
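
A quick calculation shows why long contexts get expensive. In standard self-attention every token is compared with every other token, so the number of pairwise scores grows with the square of the sequence length. The sketch counts scores for a single attention matrix only; real models have many layers and heads and often use optimizations that change the constants.

```python
def attention_pairs(num_tokens: int) -> int:
    """Number of token-to-token scores in one full self-attention matrix."""
    return num_tokens * num_tokens


for n in (4_000, 8_000, 128_000):
    print(f"{n:>7,} tokens -> {attention_pairs(n):,} pairwise scores")
```

Going from 4,000 to 8,000 tokens doubles the input but quadruples the number of scores.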

Quality Trade-offs

Models generally perform better with shorter contexts where they can focus on the most relevant information.

Recent Improvements

Newer models are dramatically increasing context windows—from 4,000 tokens to over 1 million in some cases—making this less of a practical limitation.

How much do tokens cost?

Token-based pricing is how most AI services charge for usage, and costs vary significantly:

Typical Pricing Structure

- Input tokens (what you send): Usually cheaper

- Output tokens (what the AI generates): Usually more expensive

- Premium models: More expensive per token but often higher quality

Example Costs (as of 2025):

- GPT-3.5 Turbo: ~$0.0005 input / $0.0015 output per 1,000 tokens

- GPT-5 (base): ~$0.00125 input / $0.010 output per 1,000 tokens

- Claude Sonnet 4: ~$0.003 input / $0.015 output per 1,000 tokens

- Claude Opus 4: ~$0.015 input / $0.075 output per 1,000 tokens
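
To turn these rates into a per-request figure, a small calculator like the following can help. The prices are copied from the list above and will go stale; the dictionary keys are just labels for this sketch, not official API model identifiers.

```python
# USD per 1,000 tokens as (input, output), copied from the example list above.
PRICES_PER_1K = {
    "gpt-3.5-turbo": (0.0005, 0.0015),
    "gpt-5": (0.00125, 0.010),
    "claude-sonnet-4": (0.003, 0.015),
    "claude-opus-4": (0.015, 0.075),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, given its input and output token counts."""
    input_price, output_price = PRICES_PER_1K[model]
    return input_tokens / 1000 * input_price + output_tokens / 1000 * output_price


# A 2,000-token prompt with a 500-token reply:
print(f"${request_cost('gpt-5', 2_000, 500):.4f}")  # $0.0075
```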

Hidden Costs:

Remember that longer conversations accumulate tokens from the entire chat history, not just new messages. This is why Claude docs will often recommend starting an entirely new chat unless the chat history is crucial for your task.
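
The effect is easy to underestimate, so here is a small sketch of the arithmetic. It assumes each turn adds a fixed number of new tokens and that every request resends the full history so far, which is a simplification of how chat APIs typically bill input tokens.

```python
def cumulative_input_tokens(new_tokens_per_turn: list) -> int:
    """Total input tokens sent if every turn resends the whole history so far."""
    total = 0
    history = 0
    for new_tokens in new_tokens_per_turn:
        history += new_tokens  # the history grows with each turn
        total += history       # and the whole history is sent again
    return total


turns = [100] * 10  # ten turns, each adding 100 new tokens (hypothetical)
print(sum(turns))                      # 1000
print(cumulative_input_tokens(turns))  # 5500
```

Ten turns of 100 tokens add up to 1,000 tokens of new text, but 5,500 tokens of input once the resent history is counted.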

Token optimization strategies

Understanding tokens helps you use AI more efficiently and cost-effectively:

Prompt Optimization

- Remove unnecessary words and filler

- Use bullet points instead of full sentences when appropriate

- Avoid repetitive instructions

Content Structure

- Front-load the most important information

- Use clear, direct language

- Break complex requests into smaller parts

Conversation Management

- Start new conversations for unrelated topics

- Summarize long conversations periodically

- Remove irrelevant context when possible

Format Choices

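- JSON and structured formats can be more token-efficient than prose when they replace wordy explanations, though heavily nested JSON adds quotes and braces that cost tokens too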

- Tables and lists often use fewer tokens than paragraphs

- Code snippets are usually more efficient than explaining code in words

Frequently Asked Questions About Tokens

Why do some characters use more tokens than others?

It all comes down to how common different character patterns were in the model's training data. Common English words get efficient single-token treatment, while weird punctuation, emoji, and non-English text can be surprisingly "expensive" token-wise. This is why copying and pasting code or foreign language text can eat through your token budget faster than you'd expect.

Can you see exactly how text gets tokenized?

Yep! Most AI providers have tools that show you exactly how your text breaks apart. OpenAI has tiktoken, and there are online tools that visualize the whole process. Super helpful when you're trying to figure out why your API bill is higher than expected.

Do tokens affect AI quality?

Sort of. When you hit token limits, the AI starts "forgetting" earlier parts of your conversation, which can make responses less coherent. Also, text that gets chopped into lots of tiny tokens can be harder for the model to understand properly—like trying to read a sentence where every word is broken into syllables.

What happens when you go over the token limit?

Depends on the provider. Some just cut off the beginning of your conversation (goodbye, context!), others refuse your request entirely, and some try to automatically summarize what came before. None of these options are great, so it's better to manage your token usage upfront rather than hit the ceiling unexpectedly.
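
If you'd rather handle this yourself, one simple approach is to drop the oldest messages until the conversation fits. The sketch below is one possible strategy, not what any particular provider does; `rough_count` is a placeholder that counts whitespace-separated words, and you would swap in a real token counter in practice.

```python
def trim_history(messages: list, max_tokens: int, count_tokens) -> list:
    """Keep the most recent messages whose combined token count fits the budget."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # this message and everything older gets dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


def rough_count(text: str) -> int:
    # Placeholder counter: one "token" per whitespace-separated word.
    return len(text.split())


history = ["first message here", "second one", "the latest question"]
print(trim_history(history, 5, rough_count))  # ['second one', 'the latest question']
```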