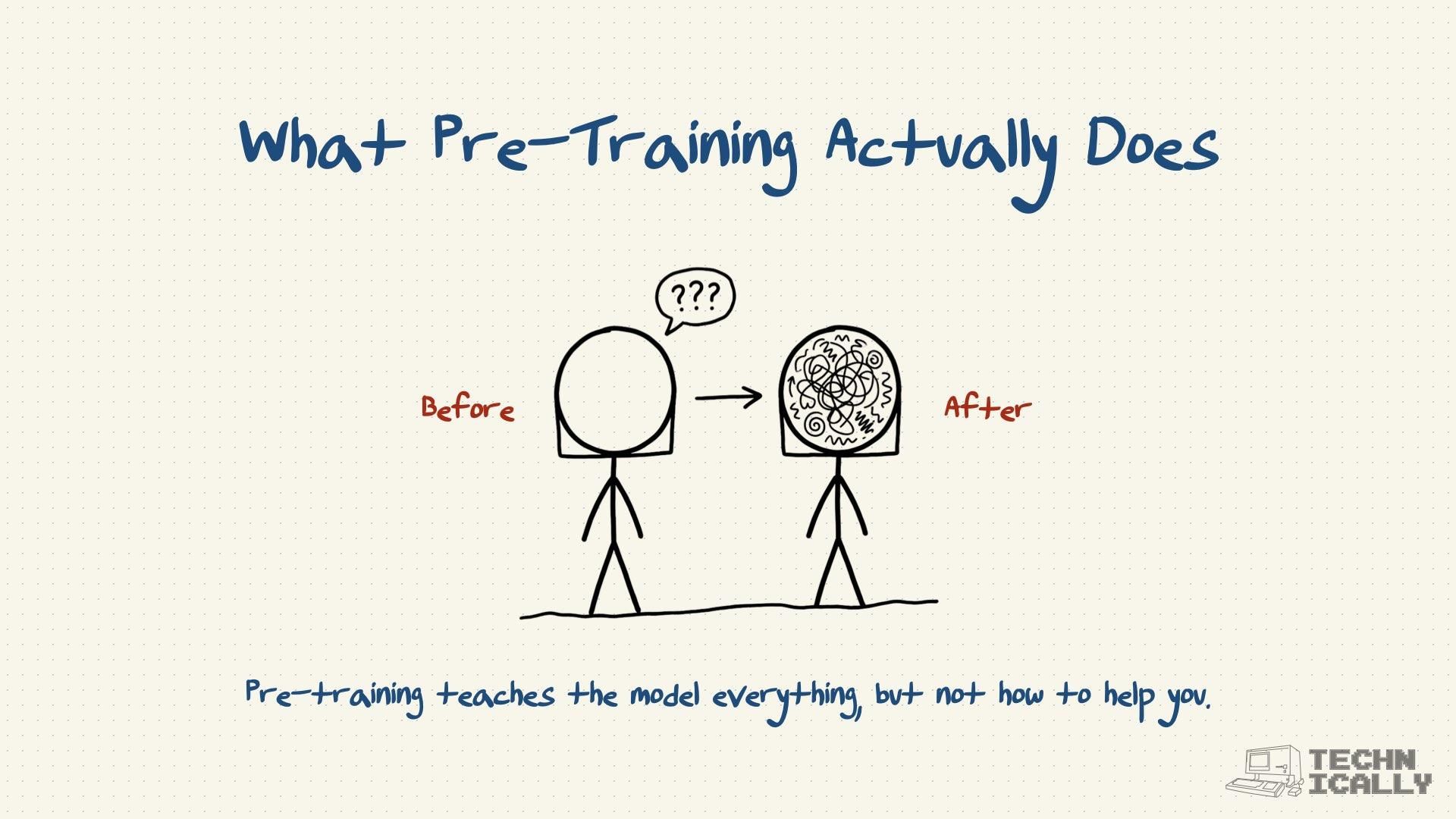

LLM post-training turns a model from a knowledgeable blob that produces rambling answers into a helpful assistant.

- Happens after pre-training, when the model already knows lots of facts

- Includes two main steps: instructional fine-tuning and RLHF (reinforcement learning from human feedback)

- Teaches the model how to be helpful, not just knowledgeable

- Makes the difference between a walking encyclopedia and a useful AI assistant

Post-training is what transforms raw intelligence into something you'd actually want to chat with.

What is post-training?

Post-training is everything that happens after pre-training to make an AI model actually useful. Think of pre-training as giving someone a PhD in everything, and post-training as teaching them how to be a good teacher, friend, or assistant.

After pre-training, you have a model that knows an incredible amount about the world but has terrible social skills. Ask it "How do I make coffee?" and it might give you a 2,000-word treatise on the history of caffeine cultivation. Technically correct, but not exactly helpful.

Post-training fixes this by teaching the model two crucial skills: how to follow instructions and how to be helpful.

What are the steps in post-training?

Training a large language model like ChatGPT involves three distinct steps:

- Pre-training: giving the model foundational knowledge by training it to predict the next word across huge amounts of internet text.

- Instructional fine-tuning: making the model more concise and helpful by training it on question-answer pairs.

- RLHF: improving the quality of the model's responses by learning from human ratings of them.

Steps 2 and 3 are what we call "post-training" — they're both about refining the pre-trained model to make it actually useful for real conversations.
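One way to see how the three steps differ is by the shape of the training data each one uses. The minimal Python sketch below is illustrative only: whitespace splitting stands in for a real tokenizer, and the names `next_word_pairs`, `InstructionExample`, and `PreferenceExample` are hypothetical, not taken from any particular training pipeline.

```python
from dataclasses import dataclass


def next_word_pairs(text: str) -> list[tuple[str, str]]:
    """Pre-training data: pair every prefix of the text with the word after it.

    Whitespace splitting stands in for a real tokenizer (assumption).
    """
    words = text.split()
    return [(" ".join(words[:i]), words[i]) for i in range(1, len(words))]


@dataclass
class InstructionExample:
    """Instructional fine-tuning data: a prompt and a good response."""
    prompt: str
    response: str


@dataclass
class PreferenceExample:
    """RLHF data: one prompt, two responses, and which one a human preferred."""
    prompt: str
    chosen: str
    rejected: str


pairs = next_word_pairs("the cat sat on the mat")
print(pairs[0])    # ('the', 'cat')
print(pairs[-1])   # ('the cat sat on the', 'mat')

sft = InstructionExample(
    prompt="How do I make coffee?",
    response="Pour hot water over ground coffee in a filter and let it drip.",
)
pref = PreferenceExample(
    prompt="I'm feeling stressed about work",
    chosen="I'm sorry to hear that. Would you like a few quick ideas that might help?",
    rejected="Stress is a psychological and physiological response to perceived threats...",
)
```

Pre-training data can be produced automatically from any text, while the other two formats need a person to write a good answer or pick the better of two answers, which is where most of the cost of post-training comes from.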

How does instructional fine-tuning work?

The first step in post-training teaches the model how to follow instructions and give helpful responses. Instead of simply continuing text the way it learned to during pre-training, the model learns to:

- Answer questions directly instead of going on tangents

- Match the tone of the conversation

- Provide appropriate detail (not too much, not too little)

- Follow specific instructions like "write a poem" or "explain this simply"

This happens through training on thousands of carefully crafted question-answer pairs that show the model what good responses look like.
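A common way to implement this step is to wrap each question-answer pair in a prompt template and compute the training loss only on the answer tokens, so the model is graded on how it responds rather than on reproducing the question. The Python sketch below assumes a made-up template (`TEMPLATE`), splits on whitespace instead of using a real tokenizer, and takes per-token losses as given rather than computing them from a model; all names are hypothetical.

```python
# Hypothetical prompt template; real chat models each define their own format.
TEMPLATE = "### Question:\n{prompt}\n### Answer:\n"


def build_sft_example(prompt: str, response: str) -> tuple[list[str], list[int]]:
    """Return the training tokens and a loss mask (1 = the model is graded on it)."""
    # Whitespace splitting stands in for a real tokenizer.
    prompt_tokens = TEMPLATE.format(prompt=prompt).split()
    response_tokens = response.split()
    tokens = prompt_tokens + response_tokens
    # Mask out the prompt so only the answer contributes to the loss.
    mask = [0] * len(prompt_tokens) + [1] * len(response_tokens)
    return tokens, mask


def masked_mean_loss(token_losses: list[float], mask: list[int]) -> float:
    """Average per-token loss over the unmasked (answer) tokens only."""
    if len(token_losses) != len(mask):
        raise ValueError("need exactly one loss per token")
    graded = sum(mask)
    if graded == 0:
        raise ValueError("no answer tokens to train on")
    return sum(loss * m for loss, m in zip(token_losses, mask)) / graded


tokens, mask = build_sft_example(
    "How do I make coffee?", "Brew ground coffee with hot water."
)
print(len(tokens), sum(mask))  # 15 6
```

Masking the prompt is a design choice rather than a requirement; some setups also train on prompt tokens, but grading only the answer keeps the training signal focused on response quality.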

How does RLHF work?

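The second step in post-training uses human feedback to align the model with human preferences and values. Human reviewers compare or rank different AI responses to the same prompt, a separate reward model learns to predict which responses they prefer, and the language model is then trained with reinforcement learning to produce outputs that score well. The result is a model that favors outputs humans find helpful, harmless, and honest.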

This is what makes ChatGPT feel more "human-like" in its responses — it's learned not just what's factually correct, but what's actually useful and appropriate in conversation.
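A widely used version of this step, popularized by OpenAI's InstructGPT work, has two formulas at its core: a reward model is trained on pairs of responses so that the human-preferred one scores higher, and the language model is then optimized against that reward with a penalty for drifting too far from the fine-tuned model it started from. The Python sketch below shows only those two formulas, assuming scalar rewards and summed log-probabilities are already available; the function names and the `beta = 0.1` default are illustrative assumptions, and the reinforcement-learning optimizer itself (commonly PPO) is not shown.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise (Bradley-Terry) reward-model loss: -log(sigmoid(margin)).

    Near zero when the human-preferred response scores much higher,
    large when the reward model ranks the pair the wrong way round.
    """
    margin = reward_chosen - reward_rejected
    # Numerically stable softplus(-margin), which equals -log(sigmoid(margin)).
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def shaped_reward(
    reward: float,
    logprob_policy: float,
    logprob_reference: float,
    beta: float = 0.1,  # illustrative penalty strength, not a recommended value
) -> float:
    """Reward seen during RL: reward-model score minus a KL-style penalty.

    The penalty grows when the model being trained assigns its response a much
    higher log-probability than the frozen fine-tuned reference model does.
    """
    return reward - beta * (logprob_policy - logprob_reference)


print(round(preference_loss(2.0, 0.0), 3))  # 0.127
print(round(preference_loss(0.0, 2.0), 3))  # 2.127
```

The penalty term is what keeps the model from "gaming" the reward model with strange outputs that happen to score well but no longer read like natural, helpful answers.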

What's the difference between pre-training and post-training?

The difference is like the difference between cramming for a test and learning how to teach:

Pre-training: "Read everything on the internet and memorize it"

- Creates vast knowledge but poor communication skills

- Model knows facts but doesn't know how to be helpful

- Responses are technically accurate but often useless

Post-training: "Learn how to package that knowledge helpfully"

- Teaches conversational skills and social awareness

- Model learns what humans actually want to hear

- Responses become concise, relevant, and genuinely useful

Here's a concrete example:

Question: "I'm feeling stressed about work"

Pre-trained model: "Stress is a psychological and physiological response to perceived threats or challenges, historically advantageous for survival but in modern contexts often maladaptive, characterized by elevated cortisol levels and activation of the sympathetic nervous system..."

Post-trained model: "I'm sorry to hear you're feeling stressed. Here are a few quick things that might help: take some deep breaths, step away from your desk for a few minutes, or try writing down what's bothering you. Would you like to talk about what's causing the stress?"

Same knowledge base, completely different approach to being helpful.

Why is post-training necessary?

Because knowing facts and knowing how to communicate are completely different skills. A pre-trained model is like a brilliant professor who can't stop lecturing — incredibly knowledgeable but exhausting to talk to.

Post-training teaches the model:

- Audience awareness: Is this person a beginner or expert?

- Context sensitivity: What kind of response does this situation call for?

- Practical focus: What does this person actually need to know?

- Conversational flow: How do you build on what was said before?

- Safety considerations: How do you avoid harmful or inappropriate responses?

Without post-training, even the most knowledgeable AI would be practically useless for real conversations.

How long does post-training take?

Much less time than pre-training, but it's more expensive per example because it requires human involvement:

- Instructional fine-tuning: Days to weeks (vs. months for pre-training)

- RLHF: Additional days to weeks of human feedback collection and training

The time is shorter, but the cost per training example is much higher because humans have to write question-answer pairs and provide ratings, whereas pre-training learns from raw internet text that needs no human labeling.

Can you skip post-training?

Technically yes, but you probably wouldn't want to use the result. Companies sometimes release "base" pre-trained models for researchers, but they're more like raw materials than finished products.

Without post-training, you get an AI that:

- Gives overly verbose, unfocused responses

- Doesn't follow instructions reliably

- May produce inappropriate or harmful content

- Feels robotic and unhelpful in conversation

Post-training is what makes the difference between a research curiosity and a consumer product.

Frequently Asked Questions About Post-training

Is post-training a one-time process?

No, it's ongoing. Companies continuously collect user feedback and periodically retrain their models with new human preference data. As they discover edge cases or areas for improvement, they create new training examples to address them.

Do all language models need post-training?

Any model intended for human interaction does. If you're building a language model for a specific technical task (like code completion), you might use different post-training approaches, but some form of alignment with human expectations is usually necessary.

How do you measure post-training success?

Through human evaluation. Companies use human reviewers to rate responses on helpfulness, accuracy, safety, and other criteria. They also monitor real user interactions and feedback to see how well the post-trained model performs in practice.
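Human evaluations are often run as side-by-side comparisons: a reviewer sees a new model's answer and an older model's answer to the same prompt and picks the better one. A simple summary of many such judgments is the new model's win rate. This Python sketch assumes hypothetical labels `'new'`, `'old'`, and `'tie'` and uses the common convention of counting a tie as half a win.

```python
from collections import Counter


def win_rate(judgments: list[str]) -> float:
    """Share of side-by-side comparisons won by the new model (ties count half).

    Each judgment is one of the hypothetical labels 'new', 'old', or 'tie'.
    """
    if not judgments:
        raise ValueError("no judgments to score")
    counts = Counter(judgments)
    unexpected = set(counts) - {"new", "old", "tie"}
    if unexpected:
        raise ValueError(f"unexpected labels: {sorted(unexpected)}")
    return (counts["new"] + 0.5 * counts["tie"]) / len(judgments)


print(win_rate(["new", "new", "old", "tie"]))  # 0.625
```

Here the new model wins two comparisons and ties one out of four, so it scores (2 + 0.5) / 4 = 0.625; anything above 0.5 means reviewers preferred it more often than the old model.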

Can post-training make a model worse?

If done poorly, yes. Inconsistent human feedback, biased training examples, or overly restrictive safety measures can make models less helpful or introduce new problems. This is why companies invest heavily in training their human reviewers and establishing clear guidelines.